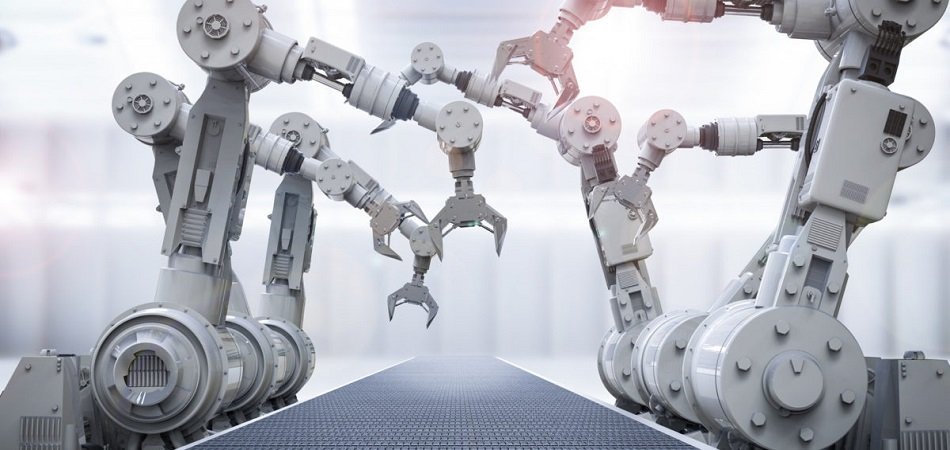

This week, we speak with arguably one of the best-known folks in the domain of neural networks: Jurgen Schmidhuber. He’s working on a lot of different applications now in heavy industry, self-driving cars, and other spaces.

We talk to him about the future of manufacturing and more broadly, how machines and robots learn. Schmidhuber uses the analogy of a baby learning about the world around it. He has a lot of interesting perspectives on how the general progression of making machines more intelligent will affect other industries outside of where AI is arguably best known today: consumer tech and advertising.

If you’re in the manufacturing space, this will be an interesting interview to tune into. If you’re just interested in what the next phase in AI might be like, I think Schmidhuber actually frames it pretty succinctly.

Subscribe to our AI in Business Podcast with your favorite podcast service:

Guest: Jurgen Schmidhuber, co-Founder and Chief Scientist – NNAISENSE

Expertise: Artificial intelligence applied to manufacturing

Brief Recognition: Schmidhuber earned his PhD in Computer Science in 1993. He is a professor at the University of Applied Sciences and Arts of Italian Switzerland and Professor of AI at the Università della Svizzera Italiana. He is also the Scientific Director at IDSIA.

Interview Highlights

(04:00) Could you paint a picture of the current state of machine learning and deep learning?

JS: At the moment, almost all AI and deep learning is passive…For example, you speak to your smartphone, it has an LSTM which recognizes speech and other networks that recognize images and fingerprints and whatever, and all of that is passive in the sense that your smartphone doesn’t have fingers like a robot.

Now active AI is when a robot or some other kind of an active process interacts with an environment and shapes the data that is coming in through its own actions. That’s what babies do, and so do the machines in production that control industrial processes: machines that make T-shirts, that make shoes, that make all the stuff that you see around us.

(05:00) Where is there more opportunity in manufacturing?

JS: Most of the profits in AI today are really in marketing and selling ads, and you are interacting with some platform and it uses the data that it gets from you to predict which articles you want to read next and which ad you are most likely to click on next and so on. So all of that can be done by this passive pattern recognition technique.

Now, in the not-so-distant future, we will have some things that we don’t have at the moment. There’s going to be a little robot that we teach like a kid to do something complicated, such as assembling a smartphone. At the moment, you need humans to do that. How will it work? You will say, “Look here robot, look.”

You’ll just talk to it and interact with it. You won’t have a data glove or some fancy equipment. No, you will talk to it like you talk to a kid and you will say, “Let’s take this slab of plastic here and look, let’s take the screwdriver like that and now let’s screw in the screw like that. Hey, not like that. Like that. Not like that, like that.”

Then after some failures, it’ll be able to do it for the first time and then by itself…to figure out how to do it more quickly with less energy. And finally, it will be able to do it much better than I could do it. And once it’s doing it really well, then we freeze the learning process and we make a million copies and license it, and that’s going to change almost every activity in our economy.

(09:30) Do you think that there will be traction with sensors for the most part or supervised learning, the baby analogy?

JS: There will be a role for supervised learning, no doubt, but it’s limited in many ways. The most exciting tasks are those where there is no human teacher who knows how to do it in a good way. There are so many industrial processes currently controlled by machines, where there are a bunch of knobs and a couple of experts who sometimes try new constellations of these knobs to figure out what is a good constellation.

For example, in the chemical industry, you have big vats and inputs, chemical substances…You have a bunch of sensors, and they give you a very incomplete picture of what’s happening in these vats. There’s incomplete burning, and nobody knows the best way of injecting these additional catalysts, and at what times, and stuff like that. So there are very interesting and very complex processes where no human knows the optimal control.

So you want these machines to…figure out by themselves how to optimize these processes and how to create more of the interesting product with cheaper ingredients, for example. These are all questions where, at the moment, the supervising human teachers also don’t have a good answer. And that’s the most exciting part of it.

Just look at yourself. How did you learn to become a smart being? You didn’t download data from Netflix or from Facebook or something. No, you played around with toys, and you invented your own experiments…that’s how you learn to predict what’s going to happen if [you do something].

You got an intrinsic reward for coming up with action sequences, with experiments that lead to data that has a new interesting pattern. Interesting in the sense that there is some regularity which you didn’t know yet, and now suddenly you will know it because your learning system is acquiring this regularity and you can measure the depth of the insights that the learning system has. This becomes a reward for the part of the learning system that is generating the action sequences, the experiments.

You have to probe some world like a scientist and these little babies, they are little scientists, and they continually expand their horizon and learn new things that they didn’t know yet. Like apples fall or other objects…make the same noise after a certain predictable amount of time when you throw them to the ground. So they learn all kinds of regularities and the same is true for these artificially curious machines.
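The intrinsic reward described in this answer can be illustrated with a toy sketch. It is not NNAISENSE's or Schmidhuber's actual method: the class `CuriousLearner`, the `observe` method, the experiment name `drop_apple`, and the running-average predictor are all hypothetical names and simplifications chosen for illustration. The one idea it borrows from the interview is that the reward for an experiment is the measured improvement of the learner's predictions, here taken as the drop in prediction error between two successive trials of the same experiment.

```python
class CuriousLearner:
    """Toy learner rewarded for improving its own predictions (illustrative only)."""

    def __init__(self, learning_rate=0.5):
        self.learning_rate = learning_rate
        self.estimates = {}    # experiment name -> currently predicted outcome
        self.last_errors = {}  # experiment name -> prediction error on the previous trial

    def observe(self, experiment, outcome):
        """Run one experiment, learn from it, and return the intrinsic reward."""
        estimate = self.estimates.get(experiment, 0.0)
        error = abs(outcome - estimate)
        # On the very first trial there is no earlier error to compare with,
        # so the reward defaults to zero.
        previous_error = self.last_errors.get(experiment, error)
        reward = previous_error - error  # learning progress since the last trial
        # Move the prediction toward the observed outcome.
        self.estimates[experiment] = estimate + self.learning_rate * (outcome - estimate)
        self.last_errors[experiment] = error
        return reward


learner = CuriousLearner()
# A regularity the learner does not know yet: the outcome is always 1.0.
rewards = [learner.observe("drop_apple", 1.0) for _ in range(4)]
print(rewards)  # [0.0, 0.5, 0.25, 0.125]
```

In this sketch the reward shrinks as the regularity is absorbed, so a learner that picks experiments by expected reward would eventually lose interest and move on to something it cannot yet predict. An outcome the learner already predicts correctly earns no reward at all.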

(15:00) Where do you think traction will take hold for AI in manufacturing?

JS: Let me give you an example. When we started our company, NNAISENSE, in 2014, all the investor calls came from the Pacific Rim. This has greatly changed because many European makers of machines have woken up and have realized the truth of what I mentioned before: the next big wave of AI is really active AI, AI that is controlling machines.

Suddenly we have substantial investments from old industry realizing that their old control processes are going to be transformed. For example, one thing which is already public is an investment by Schott. Schott is a leading maker of glass.

You have it on your smartphone…little lenses now, billions of these little lenses are all over the world and they are really quality glass. Now, to make good glass you have to do a lot of things right and these guys have more than 100 years of experience, and that’s the reason why they know how to do it well. But they are realizing that this is still far from optimal as far as we can judge and that baby-like AI that can learn by generating its own experiments…should…further improve these processes not only for making glass but for all kinds of chemical reactions and industrial processes.

In the end, everything, all of the material around you, the tables, the chairs, everything you see around you, everything that has been made by some machine with the help of additional humans that help the machines to do it well, all of that is going to be affected because more and more of the complex stuff that currently can be done only by humans is going to be done by active learning machines.

(18:00) Are materials where traction could begin or do you think it’s just as likely that such an approach would be involved in the full iPhone process?

JS: I think you will see the baby steps in all kinds of different applications. At some point in the not-so-distant future, the first killer applications for robots will come. At the moment, all the robots have really small mass production numbers; there’s no robot that has been produced a billion times.

Maybe the first robot is going to be a toy robot, a little furry thing, and it will have eyes and ears and it will listen to you, but it will not be mission critical. I’m not sure who is going to make it first. It might be some Japanese company. But it will be something active that shapes the incoming data through its own actions and in that sense is much more sexy than what you have on your smartphone, which is passive pattern observation.

(20:00) Do we need big numbers to make this kind of robot?

JS: Not really. I just gave that example because at the moment the only stuff that is copied a billion times is software, and it’s easy to do that. It’s really hard to have a market-oriented product that is so attractive that a billion people want to buy it, something physical that you want to have in your home. And the number of robots of a certain type at the moment is at most a million, often much less than that, or a few thousand.


Header Image Credit: Burning Glass Technologies