Our AI Opportunity Landscape research clearly demonstrates that chatbots are relatively over-hyped in the marketplace, and most buyers drastically overestimate their effectiveness as a result. Some of our press releases about the inflated claims surrounding chatbot deployments have ruffled the feathers of conversational interface vendors.

The purpose of this article is to help leaders think realistically about some of the challenges and opportunities of deploying conversational interfaces in financial services. The lessons below are from the data produced through our AI Opportunity Landscape research as well as direct interviews with chatbot experts in insurance, banking, and wealth management.

Determining the Business Objectives of a Chatbot

It’s important to begin with a clear idea of the business objectives, or the types of ROI, we’re looking to deliver with our chat or conversational system. Unfortunately, leaders too often come into the conversation with preconceived – but misguided – ideas of what those objectives should be for a chat application. This might be something rather vague, such as “improve customer experience,” or something that sounds specific but is not measurable as stated, such as “saving costs.”

We’ll use the example of a customer service chatbot in retail banking as a representative use-case. Though there are other use cases we could use – such as internal Q-and-A chatbots for employees, HR data, other kinds of search applications, sales enablement, etc – we’ll run with this one to help make our point today.

It’s important for leaders to have a broad understanding of the capabilities of chatbots and the challenges of implementing them. We’ve done a number of interviews on this topic – interested readers may want to listen to our AI in Financial Services podcast.

Below we break down some of these objectives in a way that would be convenient and useful for business leaders. There are three categories and a couple of different types of ROI for each:

Customer Experience

Faster Response

Improving the speed of response for customer service is one measurable benchmark that we might use for an AI system.

In this case, it might be possible to have a chat application that can handle simple requests and answer rote questions that retail banking customers often ask, the so-called “low hanging fruit.” This could be:

- “How do I reset my password?”

- “What’s my checking account balance?”

- Etc…

In order to realize viable return on investment, a cross-functional AI team within the bank would have to identify the most repetitive questions they receive via chat and voice channels and determine which of those is bounded and understandable enough to reliably train a machine to respond to. We’re looking for the intersection of (a) technically possible to respond to with AI, (b) valuable to users, and (c) valuable to the business.

For example, there might be a dozen ways to ask “what’s my balance.” Identifying these variations on a theme and including them in the database of a chatbot application can make this challenge conquerable.

However, a more complicated question such as “how much have I spent on groceries in the last two months?” has many more permutations and would probably be less boundable.

By understanding the most boundable and most frequent questions, we’ll be able to see how many of these “low hanging fruit” can be picked, and where a chatbot can respond faster and more reliably than even human agents.
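The balance and grocery examples above can be sketched in a few lines. This is a minimal illustration, not how any particular vendor's system works: the intent names, the phrasings, and the exact-match lookup are all assumptions, and a production system would typically use a trained language model rather than a phrase table.

```python
import re

# Hypothetical phrasings; a real list would be mined from the bank's own chat logs.
INTENT_VARIATIONS = {
    "check_balance": [
        "what's my balance",
        "what is my checking account balance",
        "how much money do i have",
        "show my balance",
    ],
    "reset_password": [
        "how do i reset my password",
        "i forgot my password",
    ],
}


def normalize(text):
    """Lowercase, straighten curly apostrophes, drop punctuation, collapse spaces."""
    text = text.lower().replace("\u2019", "'")
    text = re.sub(r"[^a-z0-9' ]+", " ", text)
    return " ".join(text.split())


# Reverse lookup from each normalized phrasing to its intent name.
PHRASE_TO_INTENT = {
    normalize(phrase): intent
    for intent, phrases in INTENT_VARIATIONS.items()
    for phrase in phrases
}


def match_intent(message):
    """Return the intent for a known phrasing, or None to hand off to a human."""
    return PHRASE_TO_INTENT.get(normalize(message))


print(match_intent("What\u2019s my balance?"))
print(match_intent("How much have I spent on groceries in the last two months?"))
```

The first message matches a known variation and resolves to `check_balance`. The grocery question matches nothing and returns `None`, which is the signal to route the customer to a human agent rather than guess.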

Human agents are subject to errors. They might not always remember or know the correct information when customers ask particular questions about policies or products such as current interest rates or fees that apply to certain accounts. At the very least, human agents might take a longer time to find the information they need compared to chatbots that can process the information much more quickly and consistently.

Of course, it would require training chatbots to be able to more consistently get the right updated message across and this is often more complicated than it seems. A query that would be comprehensible to a human agent might not be so for a chatbot that does not have that particular query available in its database.

The bottom line is chatbots can be trained to handle some customer transactions more quickly and accurately than human agents, but only if these transactions are boundable and understandable to a machine.

If improving time-to-response is the goal, we can measure the time it takes for a customer to receive a first reply, before and after the chatbot. We might also look at time-to-resolution, and measure the before-and-after time for a customer to receive a resolution.

Speed alone is unlikely to be the only criterion of success, but it’s a tangible, specific benchmark that we can anchor our success on.
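As a rough sketch of the before-and-after measurement described above, the snippet below computes the median time to first reply and the median time to resolution for two sets of tickets. The field names (`opened`, `first_reply`, `resolved`) and the timestamps are invented for illustration; real data would come from the bank's ticketing or chat platform.

```python
from datetime import datetime
from statistics import median


def minutes_between(start, end):
    """Elapsed minutes between two ISO-format timestamps."""
    delta = datetime.fromisoformat(end) - datetime.fromisoformat(start)
    return delta.total_seconds() / 60


def summarize(tickets):
    """Median minutes to first reply and to resolution for a list of tickets."""
    first_reply = [minutes_between(t["opened"], t["first_reply"]) for t in tickets]
    resolution = [minutes_between(t["opened"], t["resolved"]) for t in tickets]
    return {
        "median_first_reply_min": median(first_reply),
        "median_resolution_min": median(resolution),
    }


# Hypothetical tickets from before and after the chatbot went live.
before = [
    {"opened": "2024-03-01T09:00", "first_reply": "2024-03-01T09:12", "resolved": "2024-03-01T09:40"},
    {"opened": "2024-03-01T10:00", "first_reply": "2024-03-01T10:08", "resolved": "2024-03-01T10:30"},
]
after = [
    {"opened": "2024-06-01T09:00", "first_reply": "2024-06-01T09:01", "resolved": "2024-06-01T09:10"},
    {"opened": "2024-06-01T10:00", "first_reply": "2024-06-01T10:01", "resolved": "2024-06-01T10:20"},
]

print("before:", summarize(before))
print("after: ", summarize(after))
```

Medians are used here instead of averages so that a handful of unusually long tickets does not distort the comparison. That is a design choice, not a requirement.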

Self-Reported Customer Satisfaction

Many financial institutions allow customers to score their customer service experience. Maybe it’s a 1-5 scale of satisfaction, done by phone, email, or text. Maybe it’s a “yes” or “no” response when a customer is asked whether their last customer support interaction handled their issue. In either case, this benchmark of satisfaction is something we can use to experiment with chatbots.

In an interview we conducted with Paul Barba, Chief Scientist at Lexalytics, we asked him about how companies are using natural language processing, and what it takes (in terms of expertise, time, and training) to get these systems working. He walked us through interesting and fruitful use-cases that shed light on the back-end “tweaking” required to keep NLP productive in a changing business environment. Basically, he talked about how NLP approaches could be used to comb through chat logs and identify customers’ common issues and challenges, as well as the topics associated with positive customer sentiment versus negative sentiment.

We might be able to break this out across different strata. For example, we might be able to determine what tends to make senior citizen clients angry. We might be able to identify the sentiment difference around certain kinds of calls or questions and how different demographic groups respond to the chat interface. Maybe younger customers find the chat interface a more positive and immediate experience, while older customers find it more frustrating.

Learning about our different customer groups, what they like and what upsets them, is a key function that a chatbot might be able to help us perform. Not only can it potentially help us tackle questions directly, but natural language processing can also be used on the backend to learn from chat conversations how to improve the customer experience more generally.

We may realize that even though a chatbot is delivering a faster time-to-resolution on certain categories of questions, more customers report a negative experience. In other cases, the chatbots might deliver a reliably better support experience for customers. We need to have baseline measurements in place in order to investigate these differences and double down on what’s working.
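One simple way to surface the kinds of differences discussed in this section is to average satisfaction scores by customer group and by channel. The sketch below assumes a 1-5 survey scale. The age groups, channel labels, and scores are made up for illustration.

```python
from collections import defaultdict

# Hypothetical survey rows: (age_group, channel, score on a 1-5 scale).
responses = [
    ("under_40", "chatbot", 5),
    ("under_40", "chatbot", 4),
    ("under_40", "human", 4),
    ("over_65", "chatbot", 2),
    ("over_65", "chatbot", 3),
    ("over_65", "human", 4),
]


def average_scores(rows):
    """Average satisfaction score for each (age_group, channel) pair."""
    totals = defaultdict(lambda: [0, 0])  # running [sum of scores, count]
    for age_group, channel, score in rows:
        bucket = totals[(age_group, channel)]
        bucket[0] += score
        bucket[1] += 1
    return {key: total / count for key, (total, count) in totals.items()}


averages = average_scores(responses)
for (age_group, channel), avg in sorted(averages.items()):
    print(f"{age_group:10s} {channel:8s} {avg:.2f}")
```

In this invented data the chatbot scores higher than human agents with the younger group and lower with the older group, the kind of split that an overall average would hide.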

Cost Reduction

Another potential business objective would be improved efficiencies or cost reduction.

For instance, when growing a new branch of a bank in a new geographic region, the employment of a chatbot might effectively grow the customer service function without growing headcount and payroll costs. A conversational interface might also reduce the need for overseas call center agents, reducing overall outsourced services expenses.

Along that same line, using an AI-powered chatbot to handle initial contact with customers would free up some customer service agents to do other kinds of productive tasks within the bank, such as sales calls. This would reduce the overall cost of the customer service function in a broad sense.

At present, most conversational systems do not seem to be resulting in headcount reduction, but applications done right should – at a bare minimum – be able to scale customer service response with fewer added employees (or outsourced agents) than might normally be required. This should be measurable. At the onset of a chatbot project, a bank would need to determine what cost savings metric or benchmark it wants to adhere to. For example:

- Do we know how many FTEs or outsourced agents are necessary when we grow our customer base by X% – and can we determine if that headcount increase can be reduced meaningfully by a conversational interface?

- Would we be able to quantify the number of reduced human hours spent on customer interactions, and attribute that to staff reduction in call centers or the customer service function generally?
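The first question above comes down to simple arithmetic once a few inputs are known. The sketch below is a back-of-the-envelope model only: the contact volume, the contacts each agent can handle per month, the growth rate, and the share of contacts the chatbot fully resolves (`deflection_pct`) are all hypothetical numbers that a bank would need to replace with its own.

```python
import math


def agents_needed(monthly_contacts, contacts_per_agent, deflection_pct=0):
    """Agents required after the chatbot fully handles deflection_pct% of contacts."""
    human_contacts = monthly_contacts * (100 - deflection_pct) / 100
    return math.ceil(human_contacts / contacts_per_agent)


# Hypothetical inputs.
current_contacts = 40_000   # customer contacts per month today
contacts_per_agent = 800    # contacts one agent can handle per month
growth_pct = 20             # expected growth in customer contacts
deflection_pct = 15         # share of contacts the chatbot resolves on its own

grown_contacts = current_contacts * (100 + growth_pct) // 100

today = agents_needed(current_contacts, contacts_per_agent)
without_bot = agents_needed(grown_contacts, contacts_per_agent)
with_bot = agents_needed(grown_contacts, contacts_per_agent, deflection_pct)

print(f"agents today:              {today}")
print(f"after growth, no chatbot:  {without_bot}")
print(f"after growth, chatbot:     {with_bot}")
print(f"hires avoided:             {without_bot - with_bot}")
```

With these made-up inputs, growth would call for 60 agents instead of 50, and a 15% deflection rate brings that down to 51. The model ignores ramp-up time, chatbot running costs, and contacts that bounce from the bot back to a human, all of which would matter in a real business case.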

Improved Revenue

Increased revenue is also a viable goal for chatbots in financial services – though it is less common than cost reduction and improved customer experience.

One approach is to reduce churn and improve retention by using the data captured during interactions to determine which of those interactions might be attributed to improved customer retention. Conversational data can also be used to identify customers at churn risk and take actions to re-engage them with the brand, such as sending them special offers through email or text messages that the data shows they would appreciate.

Another revenue-related chatbot application might involve cross-selling and up-selling. This is not to say that the chatbot would be expected to close a deal for a business checking account or a new loan, which is extremely unlikely. Rather, the chatbot could detect customers with the highest likelihood to purchase additional products or services, and conveniently hand those customers off to agents who are most likely to (a) handle their needs, and (b) sell them on whatever services the user seems to be most likely to buy (based on demographic information about the user, or simply based on the expressed needs or statements of the user).

To determine effectiveness, it would be useful to compare the success rate of closing a business checking account or a loan when customers are routed through a chatbot first versus to a human agent first. This might also be a good way to measure ROI.
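The comparison described above can be expressed as two close rates and the gap between them. The counts below are hypothetical, and the sketch deliberately stops at the raw rates: a real evaluation would also need comparable customer groups in each path and a check that the difference is larger than chance.

```python
def conversion_rate(closed, routed):
    """Share of routed customers who ended up opening the product."""
    return closed / routed if routed else 0.0


# Hypothetical counts for one quarter.
chatbot_first = {"routed": 400, "closed": 36}
human_first = {"routed": 500, "closed": 35}

chatbot_rate = conversion_rate(chatbot_first["closed"], chatbot_first["routed"])
human_rate = conversion_rate(human_first["closed"], human_first["routed"])

print(f"chatbot first: {chatbot_rate:.1%}")
print(f"human first:   {human_rate:.1%}")
print(f"difference:    {chatbot_rate - human_rate:+.1%}")
```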

It’s important to note that there are many more potential ways we could measure ROI, and every business is going to have different considerations for what’s important for them. Part of our work with enterprises is exploring the kinds of ROI that one could reasonably expect given the data and resource constraints of the company – and determining the metric benchmarks that projects should be held accountable to.

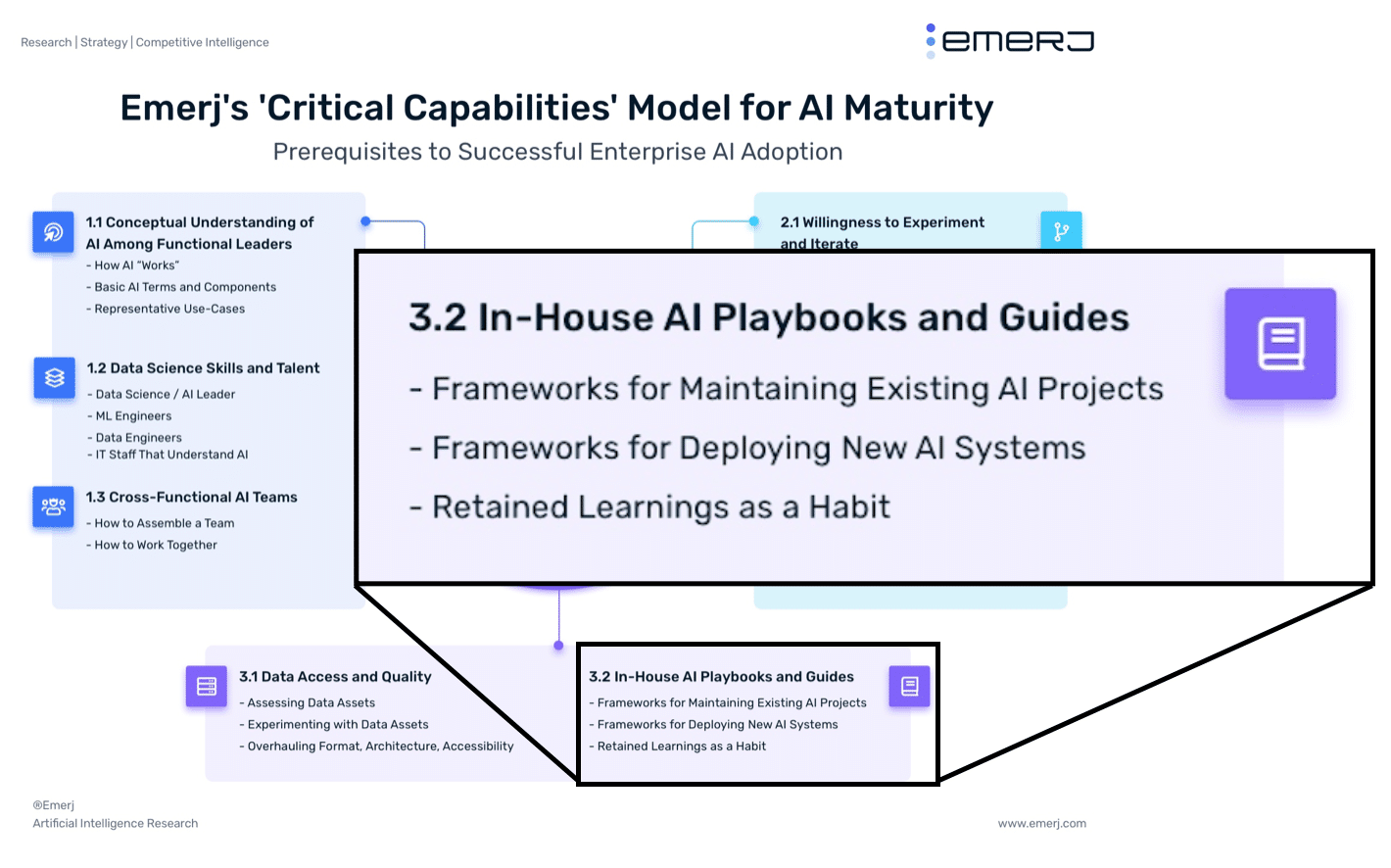

Building a Cross-Functional AI Execution Team

Some degree of cross-functional team will need to be involved in assessing viable use-cases for a chatbot – and viable measures of success. However, an even more important and long-term collaboration will need to be established to execute the project.

This will normally involve:

- Dedicated subject-matter experts – Usually customer service specialists, who understand the customer’s needs, the conversational flows of the company, and how customer service issues tie to downstream business impact.

- Dedicated data science talent – Data scientists don’t understand subject-matter data. An AI PhD from Carnegie Mellon doesn’t mean you know the first thing about customer service requests in banking. Data science talent will need to learn through “osmosis”, metaphorically shoulder-to-shoulder with subject-matter experts who understand the IT issues related to customer service, and the customer service flows and processes of the business. Intelligent hypotheses can’t be developed otherwise.

- IT champion – While multiple full-time IT folks may need to be a part of any AI transformation project (chatbots no exception), there must at least be a champion within the IT function. IT is notoriously at odds with AI initiatives, because AI projects typically hurl a variety of new issues and demands upon an already overwhelmed IT staff. Without an IT champion who believes in the potential of the AI project, and who can communicate this value to IT leadership and staff – projects may run into too much IT friction to ever come to life.

- Business champion – This is unlikely to be the decision-maker who actually cuts the check, but it should be someone who (a) believes in the project and wants to see it through, (b) has a conceptual understanding of AI adoption and AI use-cases, and (c) can communicate (read: translate) with said decision-maker.

(Note: Emerj Plus members can learn more about the role of non-technical subject matter experts in our article titled Applying AI in Business – The Critical Role of Subject-Matter Experts.)

Failure to involve IT and failure to bring on dedicated subject-matter experts are common reasons for unsuccessful chatbot initiatives. The specific balance of a cross-functional team will vary from project to project, and the talent needs of the team will evolve over the life of the project. The initial heavy lifting to label data and train an algorithm will likely require a larger team than the ongoing maintenance and exception-management needed to keep such a system up and running once it’s been set up.

The team must determine a cadence of communication and collaboration on a daily basis, as well as another cadence of communication with leadership, IT, or other parts of the business. Any agreed-upon cadence of meetings and communication is likely to change once a team gets immersed in working on the problem itself. However, going in with a cadence to begin with is much better than learning (the hard way) that projects fall apart quickly without such a cadence.

The smartest AI-adopting companies realize that the ways these cross-functional teams are built, and the way they communicate (among themselves and with other groups, such as business leadership and IT), are key lessons that need to be retained as “playbooks” or “best practices”, and potentially applied to future AI projects.

Perform a Data Audit

Business leaders would also need to carry out a data audit, which involves looking through all existing chat logs or call center logs to get an understanding of the kind of information available. It would also involve determining whether the data is accessible in an organized and understandable format, and whether the business has any history of using natural language processing (NLP) in a productive way.

A data audit would take a look at the following:

- Business Considerations – Relevant use cases and established ROI would inevitably get a much more in-depth look after the requisite team members have been pulled together and can really think about the project from different perspectives. In many cases, for example, ROI projections can change once the right experts are on board and there is more understanding of the available data.

- Training the Model – It would be necessary to train the model based on the identified bounded set of use cases and the conversational flows. It would then be possible to test the system in an isolated environment and see if common kinds of responses and chat messages get the desired results.

- Test the Model – After doing some internal testing, it would then be possible to do beta testing through limited exposure of the working model to customer interactions, and then move on to deployment.

- Deployment – Once a successful test of the model has been carried out, it would then be possible to actually integrate the system into the customer service flows and make it part of the daily work process.

Deployment is actually a multiphase process, and it is easy to get it wrong. In fact, AI deployment is done wrong over 90% of the time for a variety of reasons, and the costs are gigantic. To avoid making the same mistakes, you can find out about the full process of actually deploying a chatbot in our AI deployment roadmap, which will help you determine exactly which team members need to be part of a project and all the various phases that you need to go through to bring AI from an idea to full deployment.

Conclusion

Deploying a conversational interface isn’t easy – as many financial institutions have found out the hard way. Leadership must develop a reasonable understanding of what the technology is actually capable of – and cross-functional teams must realistically determine how project success should be measured, and which use-cases are even viable for chatbot applications.

We are years away from machines being able to replace human customer service agents entirely, but with a realistic and well-rounded approach, banks should be able to use NLP and conversational interfaces to:

- Reliably route customer service inquiries to the right human agent at the right time

- Reliably handle extremely repetitive “low hanging fruit” applications

- Improve IVR and phone interactions to bring customer inquiries more quickly to resolution

The technology is still in its nascency, and success is far from guaranteed – particularly for ambitious projects that aim to automate large swaths of customer service interactions. There are no plug-and-play solutions, and hopefully this article has provided readers with an understanding of the challenges to overcome and business processes required to see a chatbot initiative through properly.